KORU

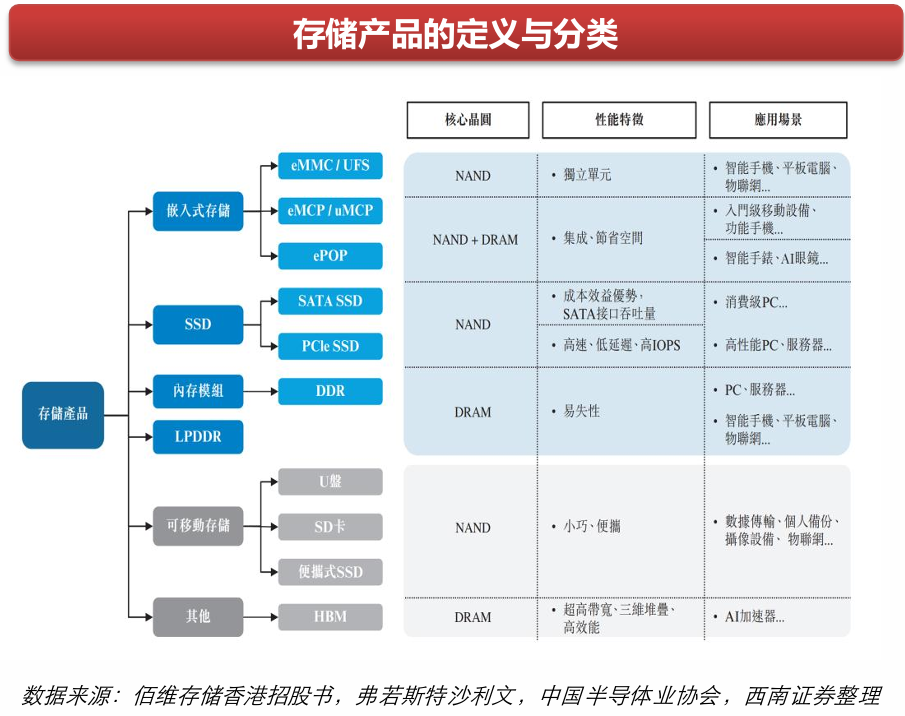

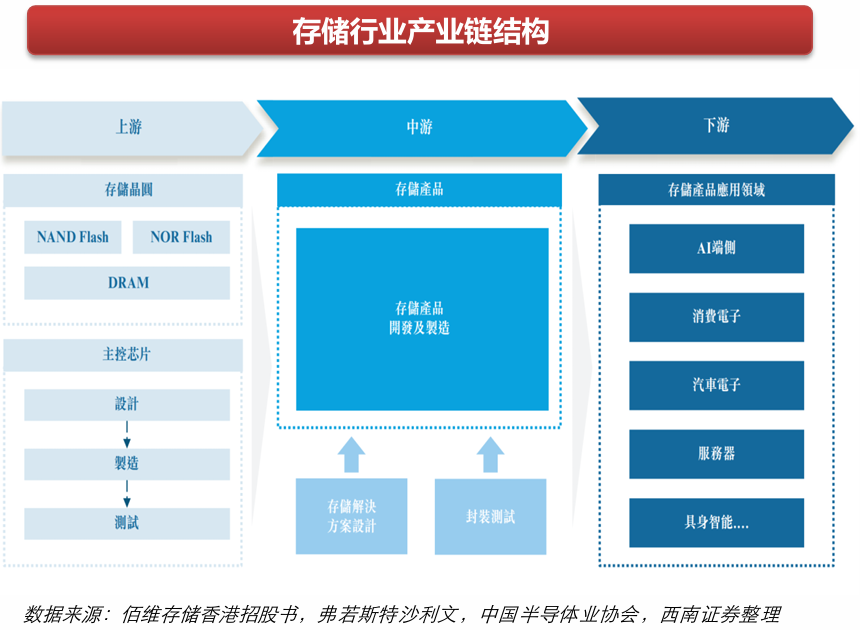

Memory is the "workbench" of AI, and it is the bottleneck.

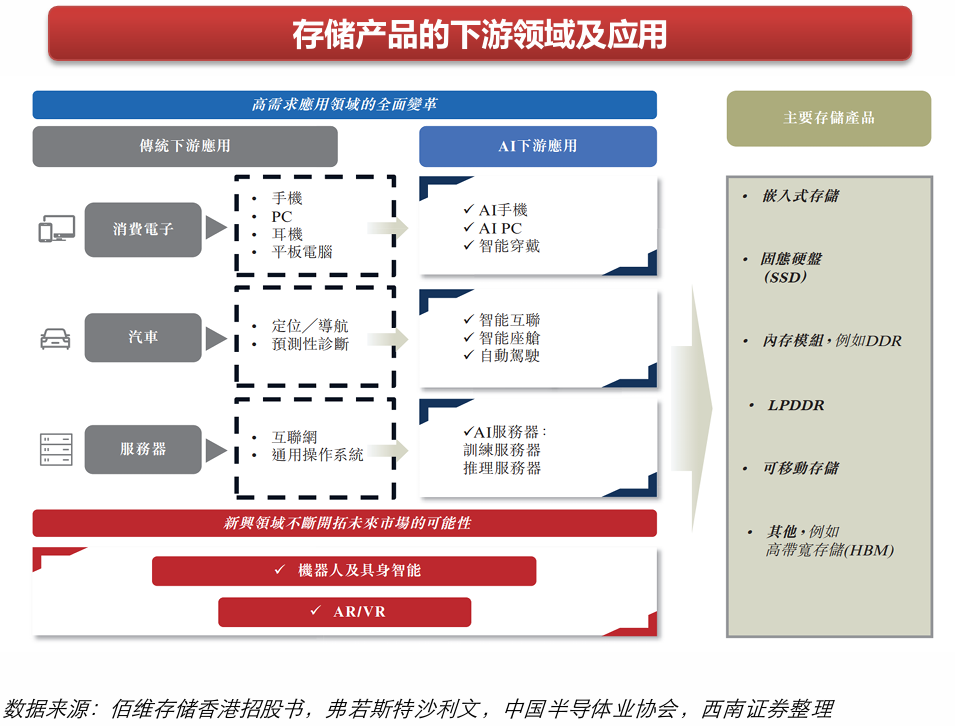

The core bottleneck in AI training and inference is not a shortage of compute but the rate at which data can be fed to the GPU. The GPU computes extremely fast, but memory bandwidth cannot keep up, so the GPU sits idle waiting for data. This is why HBM (High Bandwidth Memory) has become the most in-demand component: it is stacked DRAM mounted in the same package as the GPU die, right beside it, with bandwidth more than ten times that of ordinary DDR5.
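The "GPU waits for data" argument can be made concrete with a simple roofline estimate. The sketch below is illustrative only: `PEAK_FLOPS` is an assumed round number rather than a vendor specification, `BANDWIDTH` is the 3.35 TB/s H100 figure cited in this article, and the function name is hypothetical.

```python
def attainable_flops(intensity, peak_flops, bandwidth):
    """Roofline bound on throughput (FLOP/s).

    intensity  : arithmetic intensity of the workload, in FLOP per byte moved
    peak_flops : peak compute rate of the chip, in FLOP/s
    bandwidth  : memory bandwidth, in bytes/s
    """
    # The chip can never exceed its compute peak, and it can never
    # compute faster than memory delivers operands.
    return min(peak_flops, intensity * bandwidth)


PEAK_FLOPS = 1.0e15   # ASSUMPTION: round illustrative figure, not a spec
BANDWIDTH = 3.35e12   # bytes/s, the H100 HBM figure quoted in the article

# Intensity at which the workload stops being memory-bound.
ridge = PEAK_FLOPS / BANDWIDTH

for intensity in (1.0, 10.0, 100.0, 1000.0):
    rate = attainable_flops(intensity, PEAK_FLOPS, BANDWIDTH)
    print(f"{intensity:7.1f} FLOP/byte -> {rate / PEAK_FLOPS:6.1%} of peak")
```

Under these assumptions, any workload that performs fewer than roughly 300 operations per byte fetched is limited by memory bandwidth rather than by compute, which is the situation the paragraph above describes.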

An H100 has 80 GB of HBM with a bandwidth of 3.35 TB/s. Ordinary DRAM cannot come close to this specification. Only Samsung, SK Hynix, and Micron can produce HBM, and production capacity is extremely limited.
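To put these numbers in perspective, here is a back-of-the-envelope sketch. The HBM figures come from the article. The DDR5 configuration (dual-channel DDR5-4800) and the 70 GB model size are assumptions chosen purely for illustration, and the tokens-per-second bound assumes batch size 1 with every weight read once per generated token, ignoring caches and other overheads.

```python
HBM_BANDWIDTH = 3.35e12   # bytes/s, from the article
HBM_CAPACITY = 80e9       # bytes, from the article

# ASSUMPTION: dual-channel DDR5-4800 as the "ordinary" baseline.
# 4800 mega-transfers/s * 8 bytes per transfer * 2 channels.
DDR5_BANDWIDTH = 4800e6 * 8 * 2


def sweep_time(num_bytes, bandwidth):
    """Seconds needed to read num_bytes once at the given bandwidth."""
    return num_bytes / bandwidth


def max_tokens_per_second(weight_bytes, bandwidth):
    """Upper bound on decode speed when each token requires reading
    all model weights once (memory-bound, batch size 1)."""
    return bandwidth / weight_bytes


WEIGHT_BYTES = 70e9  # ASSUMPTION: hypothetical model with 70 GB of weights

print(f"Read 80 GB from HBM : {sweep_time(HBM_CAPACITY, HBM_BANDWIDTH) * 1e3:.1f} ms")
print(f"Read 80 GB from DDR5: {sweep_time(HBM_CAPACITY, DDR5_BANDWIDTH) * 1e3:.1f} ms")
print(f"HBM / DDR5 bandwidth: {HBM_BANDWIDTH / DDR5_BANDWIDTH:.1f}x")
print(f"Decode ceiling (HBM) : {max_tokens_per_second(WEIGHT_BYTES, HBM_BANDWIDTH):.1f} tokens/s")
print(f"Decode ceiling (DDR5): {max_tokens_per_second(WEIGHT_BYTES, DDR5_BANDWIDTH):.1f} tokens/s")
```

The ceiling scales linearly with bandwidth, so under these assumptions no amount of extra compute raises it. Only faster memory does.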

Simply put: SSDs determine how much data you can store; memory determines how fast the AI can run. The latter sets the ceiling on AI performance.
